Point Tracker

2D: CoTracker3

https://arxiv.org/pdf/2410.11831

Notation

- A sequence of T frames: \((I_t)_{t=1}^{T}, \quad \text{where each } I_t \in \mathbb{R}^{3 \times H \times W}\)

- A query point \(Q = (t^q, x^q, y^q) \in \mathbb{R}^{3}\)

- $t^q$ indicates the query frame index and $(x^q, y^q)$ represents the initial location of the query point

- Predict the corresponding point track \(P_t = (x_t, y_t) \in \mathbb{R}^{2}, \quad t = 1, \dots, T,\quad \text{with } (x_{t^q}, y_{t^q}) = (x^q, y^q)\)

- Estimates visibility \(V_t \in [0, 1]\) and confidence \(C_t \in [0, 1]\)

- Initializes: \(C_t := 0, V_t := 0\)

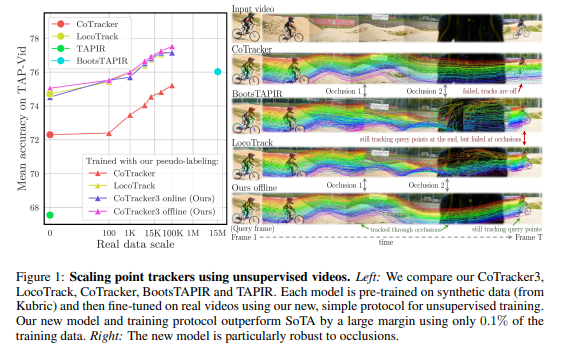

TRAINING USING UNLABELLED VIDEOS

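Step 1: Train the teacher models

- Employ multiple teacher models trained only on synthetic data from Kubric

- The teachers are CoTracker3 online, CoTracker3 offline, CoTracker, and TAPIR

Step 2: Train CoTracker3

- For each batch, one frozen teacher is chosen uniformly at random (the teachers are not updated during training). Over several epochs, the same video can therefore receive pseudo-labels from different teachers, which helps prevent overfitting and improves generalization.

- Training uses real-world, unlabelled videos; no synthetic data is used at this stage.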
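
The per-batch teacher choice can be sketched as follows. This is a minimal illustration, assuming the teachers are identified by name only; the list `TEACHERS` and the helper names are hypothetical, and no real model is loaded.

```python
import random

# Teacher names taken from the notes above; the identifiers themselves are hypothetical.
TEACHERS = ["cotracker3_online", "cotracker3_offline", "cotracker", "tapir"]

def pick_teacher(rng: random.Random) -> str:
    """Uniformly pick one frozen teacher for the current batch."""
    return rng.choice(TEACHERS)

def teacher_schedule(num_epochs: int, num_batches: int, seed: int = 0):
    """Teacher chosen for every (epoch, batch) pair."""
    rng = random.Random(seed)
    return [[pick_teacher(rng) for _ in range(num_batches)]
            for _ in range(num_epochs)]

schedule = teacher_schedule(num_epochs=3, num_batches=5)
```

Because the draw is repeated every batch, the same video may be labelled by a different teacher in a later epoch.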

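Query point sampling

Tracking a video requires query points. For the teacher chosen for the current batch, a set of query points is sampled for each video.

Query points are selected with the SIFT feature detector, which favors points that are "easy to track". The procedure is:

- Randomly select \(\hat{T}\) frames of the video as key frames

- Apply SIFT on these key frames to generate the initial track points

The intuition behind using a feature detector is that it fires on descriptive image features and produces no points where the image is ambiguous or hard to track. The hypothesis is that this filters out hard-to-track points and makes training more stable.

If SIFT produces too few points on a frame, the video is skipped entirely to preserve the quality of the training data.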
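
A rough sketch of this sampling logic is given below. The detector is passed in as a function so the example stays self-contained; `toy_detector` is a hypothetical stand-in for SIFT, and `num_key_frames` and `min_points` are assumed parameter names, not values from the paper.

```python
import numpy as np

def sample_queries(video, detect_fn, num_key_frames, min_points, rng):
    """Return an (M, 3) array of (t, x, y) queries, or None to skip the video.

    video: (T, H, W, 3) array. detect_fn(frame) returns a (K, 2) array of
    (x, y) keypoints; in practice it would wrap a SIFT detector.
    """
    T = video.shape[0]
    key_frames = rng.choice(T, size=min(num_key_frames, T), replace=False)
    queries = []
    for t in sorted(key_frames.tolist()):
        pts = np.asarray(detect_fn(video[t]), dtype=np.float32).reshape(-1, 2)
        if len(pts) < min_points:
            return None  # too few detections on a key frame: skip the whole video
        t_col = np.full((len(pts), 1), t, dtype=np.float32)
        queries.append(np.concatenate([t_col, pts], axis=1))
    return np.concatenate(queries, axis=0)

def toy_detector(frame):
    # Hypothetical stand-in for SIFT: report pixels brighter than a threshold.
    ys, xs = np.nonzero(frame.mean(axis=-1) > 0.9)
    return np.stack([xs, ys], axis=1)

video = np.zeros((8, 16, 16, 3))
video[:, 4, 5] = 1.0    # bright pixel at (x=5, y=4) in every frame
video[:, 10, 2] = 1.0   # bright pixel at (x=2, y=10) in every frame
rng = np.random.default_rng(0)
queries = sample_queries(video, toy_detector, num_key_frames=3, min_points=1, rng=rng)
skipped = sample_queries(video, toy_detector, num_key_frames=3, min_points=3, rng=rng)
```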

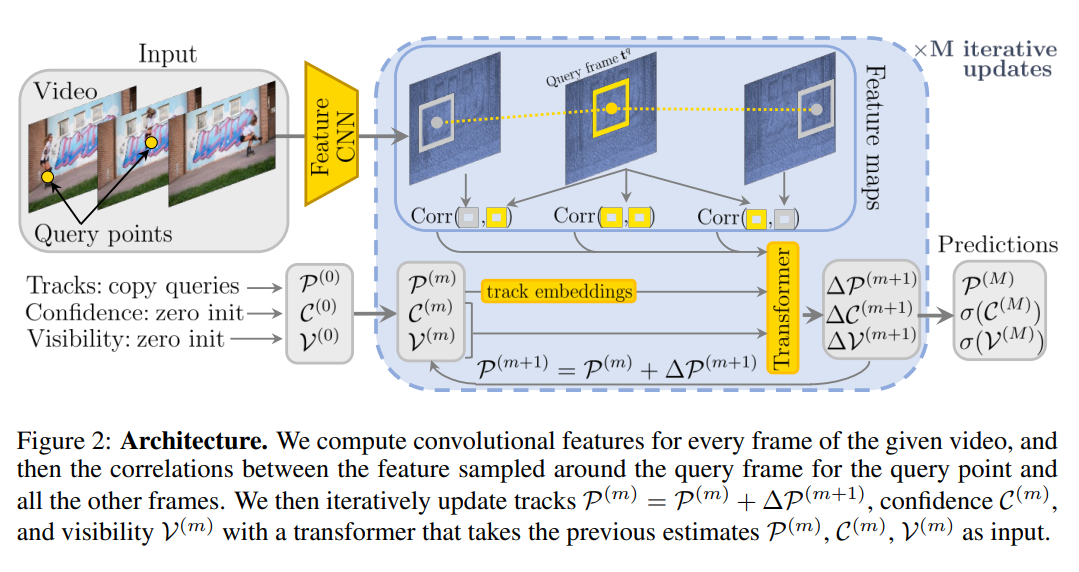

Supervision

We supervise tracks predicted by the student model with the same loss used to pretrain the model on synthetic data, with only minor modifications for handling occlusion and tracking confidence.

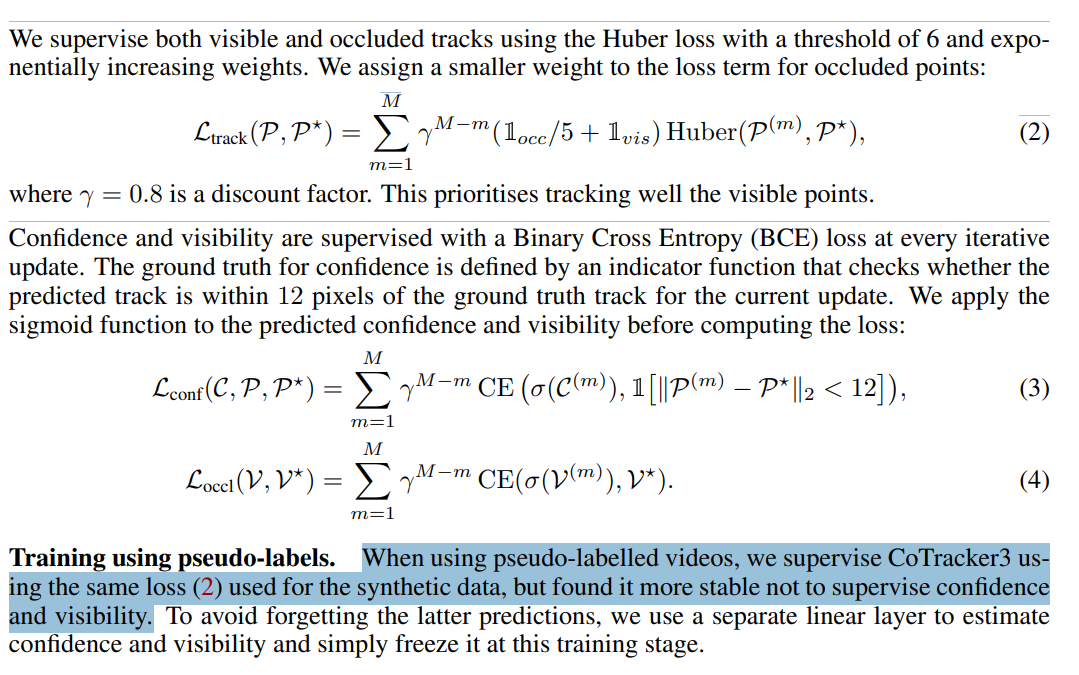

COTRACKER3 MODEL ARCHITECTURE

Feature maps

A CNN computes feature maps at 4 different scales for every frame

\[\Phi^s_t \in \mathbb{R}^{d \times \tfrac{H}{k 2^{s-1}} \times \tfrac{W}{k 2^{s-1}}}, \quad s = 1, \dots, S, \quad t = 1, \dots, T\]

- The paper uses \(k = 4\) and \(S = 4\)
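
To make the pyramid concrete, the snippet below lists the feature-map shapes per scale. It assumes the stride at scale s is \(k \cdot 2^{s-1}\) with \(k = 4\) and \(S = 4\), as in the CoTracker3 paper; the channel count `d = 128` and the input resolution are illustrative only.

```python
def pyramid_shapes(H, W, d, k=4, S=4):
    """(d, h, w) of the feature map at each scale s = 1..S."""
    shapes = []
    for s in range(1, S + 1):
        stride = k * 2 ** (s - 1)   # assumed stride: k * 2^(s-1)
        shapes.append((d, H // stride, W // stride))
    return shapes

shapes = pyramid_shapes(H=384, W=512, d=128)
```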

4D correlation features

- Only the correlation between two local neighborhoods is considered:

- the feature map around the query coordinates \((x^q, y^q)\) in the query frame \(t^q\)

- the feature map around the current track estimate \(P_t = (x_t, y_t)\) at time t

The features are sampled as follows:

\[\phi^s_t =\big\{ \Phi^s_t \big( \tfrac{x}{ks} + \delta, \, \tfrac{y}{ks} + \delta \big) : \delta \in \mathbb{Z}, \; \|\delta\|_\infty \leq \Delta \big\} \in \mathbb{R}^{d \times (2\Delta+1)^2}, \quad s = 1, \dots, S\]

- The 4D correlation is then \(\langle \phi^s_{t^q}, \phi^s_t \rangle = \text{stack}\big( (\phi^s_{t^q})^\top \phi^s_t \big) \in \mathbb{R}^{(2\Delta+1)^4}, \quad s = 1, \dots, S\)

- Intuitively, this computes the dot product between the feature vector at every position of the query window around \((x^q,y^q)\) and the feature vector at every position of the track window around \((x_t,y_t)\)

- Projection to a lower dimension (MLP)

- The 4D correlation at each scale is passed through a multi-layer perceptron (MLP) that reduces it to p dimensions: \(\text{MLP}\big(\langle \phi^s_{t^q}, \phi^s_t \rangle \big) \in \mathbb{R}^p\)

- Finally, the features of the S scales are concatenated: \(\text{Corr}_t = \Big[ \text{MLP}\big(\langle \phi^1_{t^q}, \phi^1_t \rangle \big), \dots, \text{MLP}\big(\langle \phi^S_{t^q}, \phi^S_t \rangle \big) \Big] \in \mathbb{R}^{pS}\)
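
The correlation step for a single scale can be sketched in NumPy as below. This is a simplified illustration: it samples the neighborhood with nearest-neighbor lookup and clamping (an assumption made for brevity, where an actual tracker would typically interpolate), and it omits the MLP projection. The function names are hypothetical.

```python
import numpy as np

def sample_patch(feat, x, y, delta):
    """Nearest-neighbor sample of a (2*delta+1)^2 neighborhood.

    feat: (d, h, w) feature map at one scale; (x, y) are already expressed in
    that map's coordinates. Out-of-bounds taps are clamped to the border.
    Returns an array of shape (d, (2*delta+1)**2).
    """
    d, h, w = feat.shape
    offs = np.arange(-delta, delta + 1)
    ys = np.clip(np.rint(y + offs).astype(int), 0, h - 1)
    xs = np.clip(np.rint(x + offs).astype(int), 0, w - 1)
    patch = feat[:, ys[:, None], xs[None, :]]   # (d, 2*delta+1, 2*delta+1)
    return patch.reshape(d, -1)

def correlation_4d(feat_q, p_q, feat_t, p_t, delta=3):
    """Dot product between every query-window and every track-window feature."""
    phi_q = sample_patch(feat_q, p_q[0], p_q[1], delta)   # (d, n)
    phi_t = sample_patch(feat_t, p_t[0], p_t[1], delta)   # (d, n)
    return (phi_q.T @ phi_t).reshape(-1)                  # (n * n,) = (2*delta+1)^4

rng = np.random.default_rng(0)
feat = rng.normal(size=(32, 24, 32))
corr = correlation_4d(feat, (10.2, 7.7), feat, (10.2, 7.7), delta=3)
```

With \(\Delta = 3\) each window has 49 positions, so the flattened correlation has \(49^2 = 7^4 = 2401\) entries per scale before the MLP.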

Iterative update

- Initialization

- Confidence \(C_t\) and visibility \(V_t\): initialized to 0

- Track points \(P_t\): for every time step t, initialized to the coordinates of the query point Q

- Iterative update

- Track embedding: apply a Fourier encoding to the per-frame displacements \(P_{t+1}-P_t\) and \(P_t - P_{t-1}\) to obtain the track features \(\eta_{t \to t+1}, \eta_{t-1 \to t}\)

- \[\eta_{t \to t+1} = \eta(P_{t+1} - P_t)\]

- Concatenate the inputs: \(G_t^i = [ \eta_{t-1 \to t}^i, \; \eta_{t \to t+1}^i, \; C_t^i, \; V_t^i, \; \text{Corr}_t^i ]\)

- This forms a time × query-point grid of input tokens for the transformer

- Each iteration predicts incremental updates \((\Delta P, \Delta C, \Delta V)\)

- After each update, the features \(\phi\) around the updated points are resampled and the 4D correlation is recomputed for the next iteration
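
The initialization and token assembly can be sketched as follows. The frequency bands of the Fourier encoding, the zero displacement used at the first and last frame, and the correlation width `D` are assumptions made for this example; the transformer itself is not shown.

```python
import numpy as np

def fourier_encode(v, num_freqs=4):
    """Sin/cos encoding of a (..., 2) displacement -> (..., 2 + 4 * num_freqs)."""
    freqs = 2.0 ** np.arange(num_freqs)                         # assumed frequency bands
    ang = v[..., None] * freqs                                  # (..., 2, F)
    enc = np.concatenate([np.sin(ang), np.cos(ang)], axis=-1)   # (..., 2, 2F)
    return np.concatenate([v, enc.reshape(*v.shape[:-1], -1)], axis=-1)

def build_tokens(P, C, V, corr, num_freqs=4):
    """P: (T, N, 2) tracks; C, V: (T, N); corr: (T, N, D) -> (T, N, D_tok)."""
    fwd = np.zeros_like(P)
    fwd[:-1] = P[1:] - P[:-1]        # P_{t+1} - P_t, set to zero at the last frame
    bwd = np.zeros_like(P)
    bwd[1:] = P[1:] - P[:-1]         # P_t - P_{t-1}, set to zero at the first frame
    return np.concatenate(
        [fourier_encode(bwd, num_freqs), fourier_encode(fwd, num_freqs),
         C[..., None], V[..., None], corr], axis=-1)

T, N, D = 6, 3, 10
queries_xy = np.array([[4.0, 5.0], [1.0, 2.0], [7.0, 7.0]])
P = np.broadcast_to(queries_xy, (T, N, 2)).copy()   # every frame starts at the query location
C = np.zeros((T, N))                                # confidence starts at 0
V = np.zeros((T, N))                                # visibility starts at 0
tokens = build_tokens(P, C, V, corr=np.zeros((T, N, D)))
```

The result is a (time, query point, channel) grid; each iteration would feed it to the transformer, add the predicted increments to `P`, `C`, and `V`, and rebuild the tokens.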

Offline and Online

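- In common: the same architecture and the same form of loss function.

- Differences:

- Online: runs in a sliding-window manner and tracks forward only; the loss is computed starting from the window that contains the query frame; trained on fixed-length videos.

- Takes T' frames as input and predicts their tracks.

- Then slides forward by T'/2 frames and predicts again.

- Between windows, the tracks, confidence, and visibility output by the previous window initialize the current window.

- Offline: tracks in both directions; the loss is computed on all frames; trained on videos of random length, with interpolated time embeddings to avoid overfitting.

- The loss covers all frames because the model can track bidirectionally.

- To avoid overfitting to one fixed length, training videos are randomly trimmed to a length in [T/2, T'].

- The time embeddings are linearly interpolated so the model adapts to videos of different lengths.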
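
Two small helpers illustrate the bookkeeping behind both variants. They are sketches under stated assumptions: the handling of the last partial window (an extra window flush with the end of the video) is a choice made for this example, and the time embeddings are assumed to be stored as a `(T, C)` array.

```python
import numpy as np

def window_starts(num_frames, window):
    """Start indices of windows of length `window` with stride window // 2."""
    stride = window // 2
    last = max(num_frames - window, 0)
    starts = list(range(0, last + 1, stride))
    if starts[-1] != last:
        starts.append(last)   # assumed tail handling: final window ends at the last frame
    return starts

def interpolate_time_embeddings(emb, new_len):
    """Linearly resample (T, C) time embeddings to (new_len, C)."""
    T, C = emb.shape
    src = np.linspace(0.0, 1.0, T)
    dst = np.linspace(0.0, 1.0, new_len)
    return np.stack([np.interp(dst, src, emb[:, c]) for c in range(C)], axis=1)

starts = window_starts(num_frames=20, window=8)
emb = np.arange(8, dtype=float)[:, None] * np.ones((1, 2))
emb_long = interpolate_time_embeddings(emb, new_len=15)
```

In the online case, consecutive windows overlap by half their length, which is what lets the previous window's output initialize the next one.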

3D: VGGT

Point cloud reconstruction

https://arxiv.org/abs/2503.11651

Overview

\[f\bigl((I_i)_{i=1}^N\bigr) \;=\; \bigl(g_i, D_i, P_i, T_i \bigr)_{i=1}^N\]

Outputs

- Camera parameters \(g = [q, t, f] \in \mathbb{R}^9\) (intrinsics and extrinsics)

- rotation quaternion \(q \in \mathbb{R}^4\)

- translation vector \(t \in \mathbb{R}^3\)

- Field of view (focal length) \(f \in \mathbb{R}^2\)

- Depth map \(D_i \in \mathbb{R}^{H\times W}\)

- Point map \(P_i \in \mathbb{R}^{3\times H\times W}\)

- Features \(T_i \in \mathbb{R}^{C\times H\times W}\) for tracking

- It ingests the query point \(y_q\) and the dense tracking features \(T_i\) output by the transformer \(f\) and then computes the track.

Predict

- N cameras

- The coordinate frame of the first camera \(g_1\) is taken as the world reference frame

- The order of the images in the input sequence is arbitrary. The network architecture is designed to be permutation equivariant for all but the first frame.

- Combining the independently estimated depth maps and camera parameters gives more accurate 3D points than using the predicted point maps directly
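
The last point, turning a depth map plus camera parameters into 3D points, can be sketched as a standard pinhole back-projection. The conventions here are assumptions for the example: intrinsics given as `fx, fy, cx, cy`, and world-to-camera extrinsics \(X_{cam} = R X_{world} + t\). Converting the quaternion and field of view of \(g_i\) into these quantities is not shown.

```python
import numpy as np

def unproject_depth(depth, fx, fy, cx, cy, R, t):
    """depth: (H, W). Returns world-frame points of shape (H, W, 3).

    Assumes a pinhole camera and world-to-camera extrinsics
    X_cam = R @ X_world + t.
    """
    H, W = depth.shape
    u, v = np.meshgrid(np.arange(W), np.arange(H))   # pixel coordinates
    x = (u - cx) / fx * depth
    y = (v - cy) / fy * depth
    cam = np.stack([x, y, depth], axis=-1)           # (H, W, 3) camera-frame points
    return (cam - t) @ R                             # row-vector form of R.T @ (X_cam - t)

depth = np.full((2, 3), 2.0)
points = unproject_depth(depth, fx=1.0, fy=1.0, cx=0.0, cy=0.0,
                         R=np.eye(3), t=np.array([0.0, 0.0, 1.0]))
```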

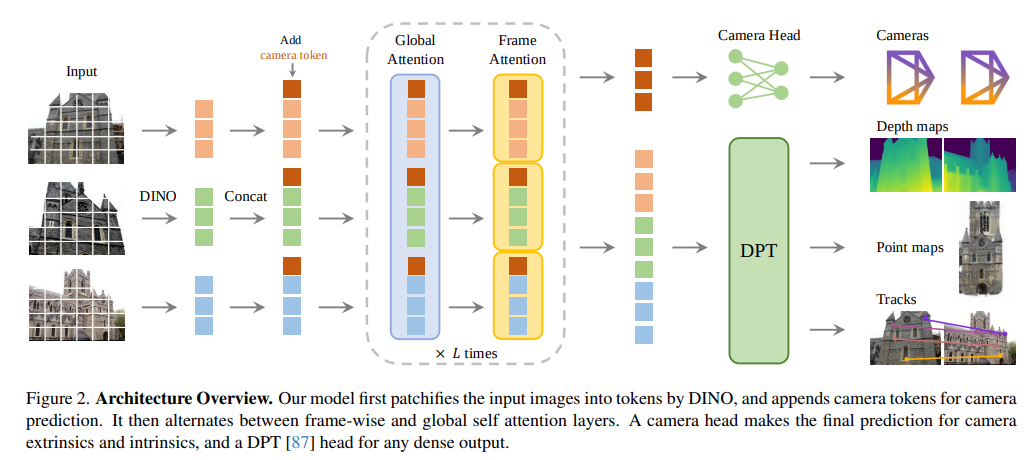

Architecture

Encoder: DINO

- Image I is initially patchified into a set of K tokens: \(t^I \in \mathbb{R}^{K\times C}\)

- The combined set of image tokens from all frames: \(t^I = \bigcup_{i=1}^{N} \{ t^I_i \}\)

- In addition, each frame receives:

- An additional camera token: \(t^g_i \in \mathbb{R}^{1\times C'}\)

- Four register tokens \(t^R_i \in \mathbb{R}^{4\times C'}\), which store global information and help cross-frame alignment

- The camera token and register tokens of the first frame are set to a different set of learnable tokens than those of all other frames (the first frame and the remaining frames have separately initialized and separately learned token embeddings).

Alternating-Attention

Alternating frame-wise and global self-attention layers

- Does not employ any cross-attention layers, only self-attention ones.

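- By default, L = 24 layers of alternating attention (frame-wise + global) are used.

- Frame-wise self-attention: attention is computed within each frame separately, over that frame's K tokens only. Tokens attend to tokens of the same frame; tokens of other frames do not take part.

- Global self-attention: the tokens of all frames are concatenated and attention is computed once over the whole set, so every token can attend to every other token.

- One way to implement this is with an attention mask

- Outputs

- \(\hat{t}^R_i\): the output register tokens, which are discarded.

- \(\hat{t}^I_i\) and \(\hat{t}^g_i\): used for the subsequent predictions.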
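
The difference between the two attention types comes down to how the token tensor is shaped before attention. The sketch below uses a single head without learned projections (a simplification for illustration) and shows that frame-wise attention equals global attention under a block-diagonal mask.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(tokens, mask=None):
    """Single-head attention without learned projections. tokens: (..., L, C)."""
    scores = tokens @ np.swapaxes(tokens, -1, -2) / np.sqrt(tokens.shape[-1])
    if mask is not None:
        scores = np.where(mask, scores, -np.inf)   # masked pairs get zero weight
    return softmax(scores) @ tokens

def frame_attention(tokens):
    """tokens: (N, K, C). Each frame attends only to its own K tokens."""
    return self_attention(tokens)

def global_attention(tokens):
    """Flatten frames so that all N * K tokens attend to each other."""
    N, K, C = tokens.shape
    return self_attention(tokens.reshape(N * K, C)).reshape(N, K, C)

rng = np.random.default_rng(0)
tokens = rng.normal(size=(3, 5, 8))                # N=3 frames, K=5 tokens, C=8
frame_id = np.repeat(np.arange(3), 5)
block_mask = frame_id[:, None] == frame_id[None, :]
masked = self_attention(tokens.reshape(15, 8), block_mask).reshape(3, 5, 8)
```

Reshaping is usually cheaper than masking, since the masked variant still computes the full \(NK \times NK\) score matrix.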

Coordinate Frame

The coordinate frame of the first camera \(g_1\) is taken as the world reference frame

The camera extrinsics output for the first camera are set to the identity, i.e., the first rotation quaternion is \(q_1 = [0, 0, 0, 1]\) and the first translation vector is \(t_1 = [0, 0, 0]\).
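
As a quick check of the identity convention, the helper below converts a quaternion to a rotation matrix. It assumes a scalar-last layout \([x, y, z, w]\), which is the layout under which \([0, 0, 0, 1]\) is the identity rotation; the function name is hypothetical.

```python
import numpy as np

def quat_to_rotmat(q):
    """Scalar-last quaternion [x, y, z, w] -> 3x3 rotation matrix."""
    x, y, z, w = np.asarray(q, dtype=float) / np.linalg.norm(q)
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - z * w),     2 * (x * z + y * w)],
        [2 * (x * y + z * w),     1 - 2 * (x * x + z * z), 2 * (y * z - x * w)],
        [2 * (x * z - y * w),     2 * (y * z + x * w),     1 - 2 * (x * x + y * y)],
    ])

R1 = quat_to_rotmat([0.0, 0.0, 0.0, 1.0])   # first camera: identity rotation
half = np.sqrt(0.5)
Rz90 = quat_to_rotmat([0.0, 0.0, half, half])   # 90 degrees about the z axis
```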

Prediction heads / Decoder

- Camera Predictions: using four additional self-attention layers followed by a linear layer.

- predicts the camera intrinsics and extrinsics.

- Dense Predictions:

- \(\hat{t}^I_i\) is first converted to dense feature maps \(F_i \in \mathbb{R}^{C''\times H \times W}\) with a DPT layer.

- Each \(F_i\) is then mapped with a 3×3 convolutional layer to the corresponding depth and point maps \(D_i\) and \(P_i\).

- The aleatoric uncertainty maps \(\Sigma^D_i \in \mathbb{R}^{H \times W}_+\) and \(\Sigma^P_i \in \mathbb{R}^{H \times W}_+\) are also predicted for each depth and point map

- The DPT head also outputs dense features \(T_i \in \mathbb{R}^{C\times H\times W}\)

- Tracking

- Uses the CoTracker2 architecture, which takes the dense tracking features \(T_i\) as its input feature maps.

- \(\mathbb{T}\Big( (y_j)_{j=1}^{M}, (T_i)_{i=1}^{N} \Big)= (\hat{y}_{j,i})_{\substack{i=1,\dots,N \\ j=1,\dots,M}}\) predicts the set of 2D points in all images \(I_i\)

Training

\[L = L_{\text{camera}} + L_{\text{depth}} + L_{\text{pmap}} + \lambda L_{\text{track}}\]

- \(\lambda = 0.05\)

- \(L_{\text{camera}} = \sum_{i=1}^{N} \| \hat{g}_i - g_i \|_{\epsilon}\) comparing the predicted cameras with the ground truth using Huber loss.

- \[L_{\text{depth}} =\sum_{i=1}^{N}\Big\|\Sigma^D_i \odot (\hat{D}_i - D_i)\Big\|+\Big\|\Sigma^D_i \odot (\nabla \hat{D}_i - \nabla D_i)\Big\|-\alpha \log \Sigma^D_i,\]

- ⊙ is the channel-broadcast element-wise product

- aleatoric-uncertainty loss weighing the discrepancy between the predicted depth and the ground-truth depth with the predicted uncertainty map \(\Sigma^D_i\)

- \[L_{\text{pmap}} =\sum_{i=1}^{N}\Big\|\Sigma^P_i \odot (\hat{P}_i - P_i)\Big\|+\Big\|\Sigma^P_i \odot (\nabla \hat{P}_i - \nabla P_i)\Big\|-\alpha \log \Sigma^P_i,\]

- \[L_{\text{track}} = \sum_{j=1}^{M} \sum_{i=1}^{N} \| y_{j,i} - \hat{y}_{j,i} \|\]

- following CoTracker2, we apply a visibility loss
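
The uncertainty-weighted depth term can be sketched for a single frame as below. The choice of norm (a per-pixel L1 mean), the forward-difference gradient, and the value of `alpha` are assumptions for this illustration; the notes do not fix them.

```python
import numpy as np

def spatial_grad(x):
    """Forward differences along height and width, stacked to (2, H-1, W-1)."""
    gy = x[1:, :-1] - x[:-1, :-1]
    gx = x[:-1, 1:] - x[:-1, :-1]
    return np.stack([gy, gx])

def uncertainty_depth_loss(pred, gt, sigma, alpha=1.0):
    """pred, gt, sigma: (H, W) with sigma > 0. Norms are L1 means (assumption)."""
    data = np.abs(sigma * (pred - gt)).mean()
    grad = np.abs(sigma[:-1, :-1] * (spatial_grad(pred) - spatial_grad(gt))).mean()
    reg = -alpha * np.log(sigma).mean()   # discourages sigma from collapsing to zero
    return data + grad + reg

rng = np.random.default_rng(0)
gt = rng.uniform(1.0, 5.0, size=(4, 5))
ones = np.ones_like(gt)
loss_perfect = uncertainty_depth_loss(gt, gt, ones)
loss_offset = uncertainty_depth_loss(gt + 1.0, gt, ones)
```

A constant depth offset leaves the gradient term at zero, so only the data term contributes in `loss_offset`.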

Ground Truth Coordinate Normalization

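- All 3D quantities (point maps P, camera translations t, depths D) are expressed in the coordinate frame of the first camera \(g_1\)

- \(\bar{d} = \text{mean} \big( \| P \|_2 \big)\): the mean Euclidean distance of all points to the origin

- This scale \(\bar{d}\) is used to normalize the camera translations t, the point maps P, and the depths D

- No such normalization is applied to the predictions output by the transformer; instead, the network is forced to learn the chosen normalization from the training data.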
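
A minimal sketch of this ground-truth normalization is shown below. The array layouts and the optional `valid` mask are assumptions for the example.

```python
import numpy as np

def normalize_ground_truth(points, translations, depths, valid=None):
    """Scale all 3D quantities by the mean point distance to the origin.

    points: (N, H, W, 3) in the first camera's frame; translations: (N, 3);
    depths: (N, H, W); valid: optional boolean (N, H, W) mask of usable pixels.
    """
    dist = np.linalg.norm(points, axis=-1)
    d_bar = dist[valid].mean() if valid is not None else dist.mean()
    return points / d_bar, translations / d_bar, depths / d_bar, d_bar

rng = np.random.default_rng(0)
pts = rng.normal(size=(2, 4, 4, 3)) * 7.0
trans = rng.normal(size=(2, 3))
dep = np.abs(pts[..., 2])
pts_n, trans_n, dep_n, d_bar = normalize_ground_truth(pts, trans, dep)
```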

3D: SpatialTrackerv2

https://arxiv.org/pdf/2507.12462

https://spatialtracker.github.io/

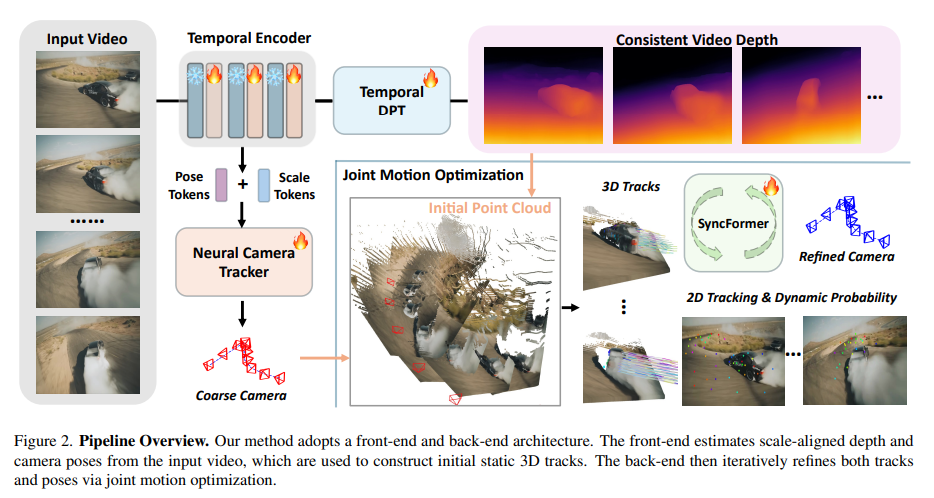

Overview

- The front end is a video depth estimator and camera pose initializer, adapted from typical monocular depth prediction frameworks with attention based temporal information encoding [VGGT]. The predicted video depths and camera poses are then fused through a scale-shift estimation module, which ensures consistency between the depth and motion predictions.

- The back end consists of a proposed Joint Motion Optimization Module, which takes the video depth and coarse camera trajectories as input and iteratively estimates 2D and 3D trajectories, along with trajectory-wise dynamics and visibility scores.

- Notation:

- N query points

- a video with T frames

Front End

Video Depth

Monocular depth estimation models follow an encoder-decoder design, with a vision encoder such as DINO and a DPT-style decoder.

- Encoder uses an alternating attention mechanism following VGGT

- DepthAnything

- The raw results after DPT head with activation functions: \(D_{norm}\)

Camera Tracker

- Encoder: a temporal encoder using an alternating-attention mechanism following VGGT

- Two additional learnable embeddings, \(P_{tok}, S_{tok}\), are inserted into the input sequence for pose and scale regression.

- They carry the global features related to camera pose and to scale/depth alignment, respectively.

- The input of each video encoder layer = image patch tokens + \(P_{tok} + S_{tok}\)

- Decode Pose

- \[P^t,\, a,\, b = H(P_{\text{tok}},\, S_{\text{tok}})\]

- \(P^t \in \mathbb{R}^{T\times 8}\) is the camera encoding parameterized by a quaternion, a translation vector, and the normalized focal length concatenated together.

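- \(a, b\) align the depth with the estimated camera poses.

- Monocular depth estimation only outputs a relative depth map \(D_{\text{norm}}\): it tells which points are nearer and which are farther, but not their absolute distances

- The depth aligned with the camera poses therefore has to be computed

- \[\mathcal{T}_{\text{ego}} = W\!\left(P,\, a \cdot D_{\text{norm}} + b\right)\]

- Given the camera parameters P and the aligned depth, this yields the 3D trajectories induced by camera ego-motion

- \(W(\cdot)\) denotes a function that lifts depth and camera poses into 3D space to obtain the 3D trajectories of the points.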
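
One plausible reading of \(W(\cdot)\) is sketched below: back-project the query pixels of the first frame with the aligned depth, then re-express those static points in every frame's camera coordinates. The pinhole intrinsics `K`, the world-to-camera pose convention, and the function name are assumptions of this example and are not taken from the paper.

```python
import numpy as np

def ego_trajectories(uv, d_norm, a, b, K, R, t):
    """Camera-frame tracks of static points induced by camera motion.

    uv: (N, 2) query pixels in frame 0; d_norm: (N,) relative depth there;
    a, b: scale and shift; K: (3, 3) intrinsics; R: (T, 3, 3) and t: (T, 3)
    world-to-camera poses (assumed convention). Returns (T, N, 3).
    """
    depth = a * d_norm + b                                       # aligned depth
    pix = np.concatenate([uv, np.ones((len(uv), 1))], axis=1)    # homogeneous pixels
    cam0 = (pix @ np.linalg.inv(K).T) * depth[:, None]           # back-project in frame 0
    world = (cam0 - t[0]) @ R[0]                                 # R0^T (X - t0)
    return np.einsum("tij,nj->tni", R, world) + t[:, None, :]    # into every frame

K = np.array([[100.0, 0.0, 32.0], [0.0, 100.0, 24.0], [0.0, 0.0, 1.0]])
uv = np.array([[32.0, 24.0], [82.0, 24.0]])
R = np.stack([np.eye(3)] * 3)
t = np.array([[0.0, 0.0, 0.0], [0.1, 0.0, 0.0], [0.2, 0.0, 0.0]])
tracks = ego_trajectories(uv, np.array([0.5, 0.5]), a=4.0, b=0.0, K=K, R=R, t=t)
```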

Back End

Notation

- \(\mathcal{T}^{2d} \in \mathbb{R}^{T\times N\times 2}\): 2D trajectories (point tracking set) in UV space (image space)

- \(\mathcal{T}^{3d} \in \mathbb{R}^{T\times N\times 3}\): 3D trajectories (point tracking set) in the camera coordinate system

- visibility probability \(p^{vis}\) and dynamic probability \(p^{dyn}\) for each trajectory

2D and 3D Embeddings

- For 2D embeddings, the design follows CoTracker3

- For 3D embeddings, \(E^{3d} = (Corr_{3D}, e^{time}, e^{Gpos}, p^{dyn}, p^{vis})\), contains the 3D correlation features \(Corr_{3D}\), the time embeddings \(e^{time}\), global position embeddings \(e^{Gpos}\) and dynamic-visibility scores.

- \[Corr_{3D} =\big\{ K \big( \tfrac{x}{ks} + \delta, \, \tfrac{y}{ks} + \delta \big) : \delta \in \mathbb{Z}, \; \|\delta\|_\infty \leq \Delta \big\}, \quad \Delta = 3 \text{ in the paper}\]

- Unlike the 2D correlations, the 3D correlations are computed on the normalized point maps from the front end instead of the depth maps

- Apply harmonic positional encoding to project these relative translations into high-dimensional feature representations

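- \[e^{\text{Gpos}} = \gamma \!\left( \Delta Q_{i,j} \right)\]

- \(Q^{\text{anc}}_{i,j}\) is the anchor point: the position of the same 3D point in the camera coordinate system of frame j (the point \(Q_i\) transformed from the reference frame into the camera coordinate system of frame j)

- \(\Delta Q_{i,j} = Q_i - Q^{\text{anc}}_{i,j}\) is the relative displacement, which reflects how far the predicted position is from the position implied by the geometric constraint

- \(\gamma(\Delta Q_{i,j}) = [\, \sin(2^k \pi \Delta Q_{i,j}), \cos(2^k \pi \Delta Q_{i,j}) \,]_{k=0}^{K}\) is the harmonic positional encoding (similar to the Fourier encoding in NeRF), which maps the displacement to a high-dimensional embedding that can represent high frequencies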
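
The encoding \(\gamma\) above translates directly into a few lines of NumPy. The number of bands used here is illustrative, and the ordering of the output channels is a choice made for this sketch.

```python
import numpy as np

def harmonic_encoding(delta_q, num_bands):
    """gamma(x) = [sin(2^k pi x), cos(2^k pi x)] for k = 0..num_bands.

    delta_q: (..., 3) relative displacement -> (..., 3 * 2 * (num_bands + 1)).
    """
    freqs = (2.0 ** np.arange(num_bands + 1)) * np.pi
    ang = delta_q[..., None] * freqs                            # (..., 3, K+1)
    enc = np.concatenate([np.sin(ang), np.cos(ang)], axis=-1)   # (..., 3, 2(K+1))
    return enc.reshape(*delta_q.shape[:-1], -1)

e_gpos = harmonic_encoding(np.zeros((5, 3)), num_bands=3)
e_half = harmonic_encoding(np.array([[0.5, 0.0, 0.0]]), num_bands=0)
```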

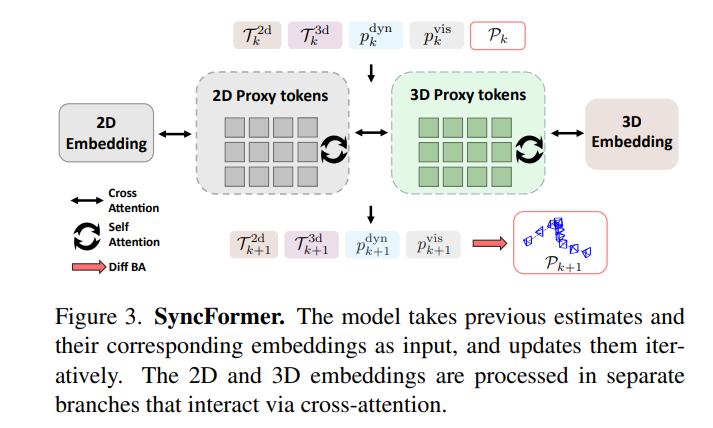

SyncFormer

In every iteration:

- takes 2D embeddings, 3D embeddings, and camera poses \(P_k\) as input

- updates the 2D trajectories \(T^{2d}\), 3D trajectories \(T^{3d}\), dynamic probabilities \(p^{dyn}\), and visibility scores \(p^{vis}\)

- \(k\) is the iteration index

- \[\mathcal{T}^{2d}_{k+1},\; \mathcal{T}^{3d}_{k+1},\; p^{\text{dyn}}_{k+1},\; p^{\text{vis}}_{k+1} = f_{\text{sync}}\big(\mathcal{T}^{2d}_k,\, \mathcal{T}^{3d}_k,\, p^{\text{dyn}}_k,\, p^{\text{vis}}_k,\, P_k\big)\]

- Transformer-based update function

- 2D and 3D trajectory updaters are modeled using separate attention layers

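- Cross-attention first compresses the correlation embeddings into a small set of 2D and 3D proxy tokens; the proxy tokens are a compact, low-dimensional representation

- Rather than running attention over all original tokens directly, a small number of proxy tokens "summarize" their information.

- First define a small number of learnable proxy tokens.

- Through cross-attention, each proxy token looks at the original tokens (the proxies are the queries, the original tokens the keys/values) and aggregates their information. With original tokens X and proxy tokens P: P′ = CrossAttention(Q=P, K=X, V=X)

- Another cross-attention layer exchanges information between the proxy tokens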
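
The proxy-token idea can be illustrated with a bare cross-attention. This sketch uses a single head and no learned projections, and the proxies are random rather than learned; it only shows the shapes and the aggregation, not the actual SyncFormer layers.

```python
import numpy as np

def cross_attention(queries, keys_values):
    """softmax(Q K^T / sqrt(C)) V with K = V and no learned projections."""
    scores = queries @ keys_values.T / np.sqrt(queries.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)   # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ keys_values

rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 64))   # original tokens
P = rng.normal(size=(16, 64))     # proxy tokens (learnable in a real model)
P_new = cross_attention(P, X)     # each proxy summarizes all of X
```

The score matrix is 16 × 1000 instead of 1000 × 1000, which is where the saving over full self-attention comes from.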

Camera Motion Optimization ($P_{k+1}$)

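The camera poses are further refined with a bundle adjustment formulation.

- Coarse alignment (Weighted Procrustes Analysis)

- Align the 3D trajectories \(\mathcal{T}^{3d}_{k+1}\) to the world coordinate system.

- Method: weighted Procrustes analysis

- The weights are derived from the dynamic scores \(p^{\text{dyn}}_{k+1}\)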

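- Points with a low dynamic score (likely static) should contribute most to the alignment, while dynamic points are down-weighted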

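- A possible form, for reference only (not verified against the paper): \(\min_{R, t, s} \sum_{i=1}^{N} w_i \, \| s R \mathcal{T}^{3d}_{k+1,i} + t - P^{\text{world}}_i \|^2\)

- Differentiable, so it can take part in training directly

- Fusion into world points

- The aligned \(\mathcal{T}^{3d}_{k+1}\) is fused into the set of world points \(P^{\text{world}}_{k+1} \in \mathbb{R}^{N \times 3}\)

- Dynamic points are filtered out according to the estimated dynamic scores, so only stable points are kept

- Direct bundle adjustment

- \(P^{\text{world}}_{k+1},\; \mathcal{T}^{2d}_{k+1},\; p^{\text{vis}}_{k+1}\) are used to optimize the camera poses

- A possible form, for reference only (not verified against the paper): \(\mathcal{L}_{\text{BA}} = \sum_{i} p^{\text{vis}}_i \, \| \pi(R_k P^{\text{world}}_i + t_k) - \mathcal{T}^{2d}_{k+1,i} \|^2\)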

2D and 3D trajectories are not updated via bundle adjustment
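
The coarse alignment step can be sketched as a weighted similarity Procrustes (Umeyama-style) solve. This is a NumPy illustration, not the paper's implementation: the weights are assumed to be \(w = 1 - p^{\text{dyn}}\) so that static points dominate, and a trainable version would need an autodiff framework.

```python
import numpy as np

def weighted_procrustes(src, dst, w):
    """Find scale s, rotation R, translation t minimizing
    sum_i w_i * || s * R @ src_i + t - dst_i ||^2.

    src, dst: (N, 3); w: (N,) non-negative weights.
    """
    w = w / w.sum()
    mu_s, mu_d = w @ src, w @ dst                 # weighted centroids
    s_c, d_c = src - mu_s, dst - mu_d
    cov = (d_c * w[:, None]).T @ s_c              # 3x3 weighted cross-covariance
    U, S, Vt = np.linalg.svd(cov)
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(U @ Vt))])   # avoid reflections
    R = U @ D @ Vt
    scale = np.trace(np.diag(S) @ D) / (w @ (s_c ** 2).sum(axis=1))
    t = mu_d - scale * R @ mu_s
    return scale, R, t

rng = np.random.default_rng(0)
src = rng.normal(size=(50, 3))
theta = np.deg2rad(30.0)
R_true = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                   [np.sin(theta),  np.cos(theta), 0.0],
                   [0.0, 0.0, 1.0]])
t_true = np.array([0.5, -1.0, 2.0])
dst = 2.0 * src @ R_true.T + t_true
p_dyn = np.zeros(50)
p_dyn[:10] = 1.0                          # first 10 points are dynamic
dst[:10] += rng.normal(size=(10, 3))      # they move independently of the camera
scale, R, t = weighted_procrustes(src, dst, w=1.0 - p_dyn)
```

With the dynamic points weighted to zero, the solve recovers the true similarity transform even though a fifth of the correspondences are corrupted.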

Training

- Posed RGB-D with tracking annotations:

- Apply full supervision of camera poses, video depth, dynamic segmentation, and 2D and 3D tracking

- Posed RGB-D:

- leverage depth supervision to improve the video depth estimator. In addition, joint training losses are applied to ensure that the 3D tracking remains consistent with the estimated depth.

- Pose-only or unlabeled data

- Apply camera loss and joint training losses

- A monocular depth model [MoGe] serves as the teacher to preserve relative-depth accuracy
